Google's Computer Vision Box Just $45

Written by Harry Fairhead

Friday, 01 December 2017

Google has just announced a new AIY kit to add to its existing voice/AI kit. This takes a Raspberry Pi Zero W, yes a Zero, and turns it and its camera into a neural network vision system. Two things are remarkable about this: one, the $45 price tag, and two, no cloud connection is needed as all computations are done in the box!

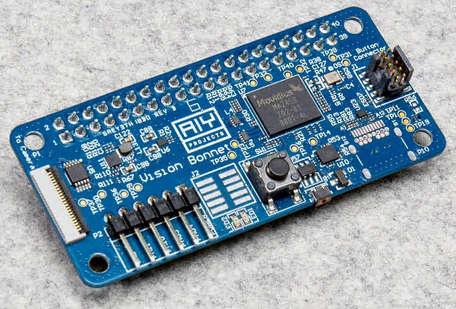

When I first heard of Google's new kit I thought I knew instantly what it was all about. A Raspberry Pi Zero W, which isn't included in the kit, just isn't powerful enough to run a neural network, so obviously it is just acting as a frontend to a cloud-based AI service which is doing all of the heavy lifting. This would have been fun, but hardly amazing, and it would lock the user into another Google service. I was very wrong. The AIY Vision Kit is based on the Intel Movidius MA2450 vision processing unit, which is capable of implementing trained neural networks. The MA2450 has been built into the VisionBonnet board, which is a custom Pi expansion card. The joke, by the way, is that Pi expansion boards are called HATs, hence Bonnet.

The VisionBonnet can compute the result of applying a neural network to an image from the Raspberry Pi camera (also not included in the kit) at 30 frames per second. It comes with three pretrained models:

How useful these models are going to be in any given application is difficult to say, but if your mind isn't already thinking up things you could do with just these three then you probably aren't in the market for this sort of kit.
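To put the 30 frames per second figure in perspective, here is a rough back-of-the-envelope sketch in Python. It only does arithmetic on the frame rate quoted above. The function names, and the assumption that several models would run one after another and share each frame slot equally, are mine for illustration and not something Google has stated about the VisionBonnet.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to process one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def per_model_budget_ms(fps: float, n_models: int) -> float:
    """Time per model if n_models run in sequence on every frame.

    Assumption (hypothetical): the models share the frame slot equally
    and nothing runs in parallel.
    """
    if n_models < 1:
        raise ValueError("n_models must be at least 1")
    return frame_budget_ms(fps) / n_models


if __name__ == "__main__":
    # One model at the quoted 30 frames per second.
    print(f"{frame_budget_ms(30):.1f} ms per frame")
    # All three pretrained models applied to every frame.
    print(f"{per_model_budget_ms(30, 3):.1f} ms per model")
```

In other words, a single model has roughly a thirtieth of a second per image, and the budget shrinks in proportion if you want more than one model to look at every frame.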

The most important thing is that, while the computation is all done onboard, Google has provided the TensorFlow code to train new models. Once trained, the model can be downloaded into the VisionBonnet and it will recognize whatever you trained it on. This is exciting and, for the first time as far as I know, provides a facility that could change the entire IoT, and intelligent gadgets in general. You could train multiple models to do different jobs and equip a robot with a set of eyes, each sensitive to some particular object or range of objects.

This may be exciting, but don't underestimate the effort it takes to train a new model. You need thousands of labeled samples and hundreds of hours of GPU/CPU time, which isn't cheap. As it is TensorFlow, you don't have to use Google's cloud; you could even use your own server farm to do the job.

The only bad news is that the kit isn't available yet, but it is promised in the US sometime this month (31st December is the estimate). Let's hope that Google makes the VisionBonnet available as a separate product. This one could be a breakthrough technology.

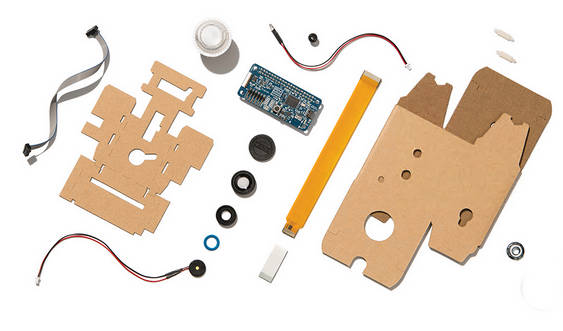

And one last thought - what is it with Google and cardboard?

More Information

Introducing the AIY Vision Kit: Add computer vision to your maker projects

Related Articles

Google AIY Cardboard And Raspberry Pi AI

Militarizing Your Backyard with Python and AI

OpenCV 3.0 Released - Computer Vision For The Rest Of Us


Last Updated ( Friday, 01 December 2017 )