Gordon Bell Prize For Simulating The Earth's Interior

Written by Sue Gee

Wednesday, 02 December 2015

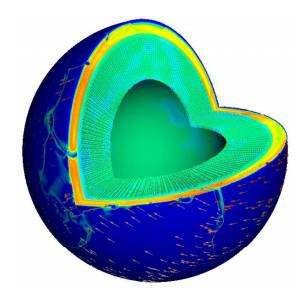

The winners of this year's ACM Gordon Bell Prize presented their research, which realistically simulates current conditions of the Earth’s interior, at SC15, the annual international supercomputing conference, held in November in Austin, Texas.

This prize, worth $10,000 and funded by Gordon Bell, a pioneer in high-performance and parallel computing, is awarded each year to recognize outstanding achievement in high-performance computing. According to the ACM:

"The purpose of the award is to track the progress over time of parallel computing, with particular emphasis on rewarding innovation in applying high-performance computing to applications in science, engineering, and large-scale data analytics."

The 2015 award has been made for advances in scalability to a group of ten researchers: Costas Bekas, Alessandro Curioni, Omar Ghattas, Michael Gurnis, Yves Ineichen, Tobin Isaac, A. Cristiano I. Malossi, Johann Rudi, Peter W. J. Staar and Georg Stadler.

The scientists, whose paper has the title “An Extreme-Scale Implicit Solver for Complex PDEs: Highly Heterogeneous Flow in Earth’s Mantle,” are affiliated with the University of Texas at Austin, IBM Research, New York University and the California Institute of Technology. Together they have been involved in research that could herald a major step toward more accurately predicting earthquakes and volcanic eruptions. The platform they used was the “Sequoia” IBM BlueGene/Q located at the Lawrence Livermore National Laboratory, one of the fastest supercomputers in the world.

According to IBM, the central challenge in designing realistic simulations of the Earth’s interior, including the flow of the mantle, lies in the trillions of variables required to produce an accurate computer model, ranging from the thickness of the plates to the viscosity of the mantle. Only a few years ago, most experts considered realistic simulations of mantle convection inconceivable. However, this team devised algorithms and a mathematical approach called an implicit solver to realistically simulate the most extreme topographic features on the Earth’s surface for the first time.

The abstract of the paper presented at SC15 states:

"To maximize accuracy and minimize runtime, the solver incorporates a number of advances, including aggressive multi-octree adaptivity, mixed continuous-discontinuous discretization, arbitrarily-high-order accuracy, hybrid spectral/geometric/algebraic multigrid, and novel Schur-complement preconditioning. These features present enormous challenges for extreme scalability. We demonstrate that, contrary to conventional wisdom, algorithmically optimal implicit solvers can be designed that scale out to 1.5 million cores for severely nonlinear, ill-conditioned, heterogeneous, and anisotropic PDEs."

One of the recipients, Omar Ghattas, Professor of Geological Sciences and of Mechanical Engineering and Director of the Center for Computational Geosciences at the Institute for Computational Engineering and Sciences, University of Texas at Austin, commented:

“While the conventional view is that the goal of efficiently solving highly nonlinear equations on millions of cores is not possible, we demonstrated that, with a careful redesign of discretization, algorithms, solvers and implementation, this goal is indeed possible.”

Costas Bekas, manager of Foundations of Cognitive Solutions, IBM Research - Zurich, added:

“We have only begun to demonstrate and explore how Big Data, advanced algorithms and supercomputing can be combined to realistically simulate the most extreme nonlinear, heterogeneous forces of nature. We envision applying the extreme availability of field sensor data and cognitive computing to absorb all of the knowledge there is on a topic and enabling practitioners to reduce the time to solution from years to weeks and even days for everything from inventing a new material to discovering an untapped source of energy.”

The simulations were performed on Sequoia, which consists of 96 IBM BlueGene/Q racks, reaching a theoretical peak performance of 20.1 petaflops. Each rack consists of 1,024 compute nodes hosting 18-core POWER processor chips, designed for big data computations and running at 1.6 GHz. The achievement reached an unprecedented 97 percent software scalability efficiency, a new world record. Without these advancements, complex simulations of this size could not have been performed.
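The Sequoia figures above can be cross-checked with a little arithmetic. The following Python sketch takes the rack count, nodes per rack and clock speed from the article, and makes two assumptions that the article does not state: that 16 of the 18 cores on each chip run application code, and that each core can complete 8 floating-point operations per cycle. Under those assumptions the totals line up with the "1.5 million cores" in the abstract and the quoted 20.1 petaflops peak.

```python
# Figures quoted in the article
RACKS = 96
NODES_PER_RACK = 1024
CLOCK_GHZ = 1.6

# Assumptions NOT stated in the article (typical BlueGene/Q figures)
COMPUTE_CORES_PER_NODE = 16    # assumed: 16 of the 18 cores run application code
FLOPS_PER_CYCLE_PER_CORE = 8   # assumed: 4-wide SIMD fused multiply-add


def sequoia_totals():
    """Return (nodes, compute cores, theoretical peak in petaflops)."""
    nodes = RACKS * NODES_PER_RACK
    cores = nodes * COMPUTE_CORES_PER_NODE
    # cores * GHz * flops/cycle gives gigaflops; divide by 1e6 for petaflops
    peak_pflops = cores * CLOCK_GHZ * FLOPS_PER_CYCLE_PER_CORE / 1e6
    return nodes, cores, peak_pflops


if __name__ == "__main__":
    nodes, cores, peak = sequoia_totals()
    print(f"nodes: {nodes:,}")
    print(f"compute cores: {cores:,}")
    print(f"theoretical peak: {peak:.1f} petaflops")
```

If the assumed per-node values were different, the core count and peak would change accordingly, so this is a plausibility check rather than a specification of the machine.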

More Information

“An Extreme-Scale Implicit Solver for Complex PDEs: Highly Heterogeneous Flow in Earth’s Mantle”

