Automatic High Dynamic Range (HDR) Photography

Written by David Conrad

Monday, 20 April 2015

If you enjoy photography you have probably experimented with HDR. The usual method is to just guess the bracketing exposures needed. Now we have an algorithm that can produce the best results with the fewest additional exposures.

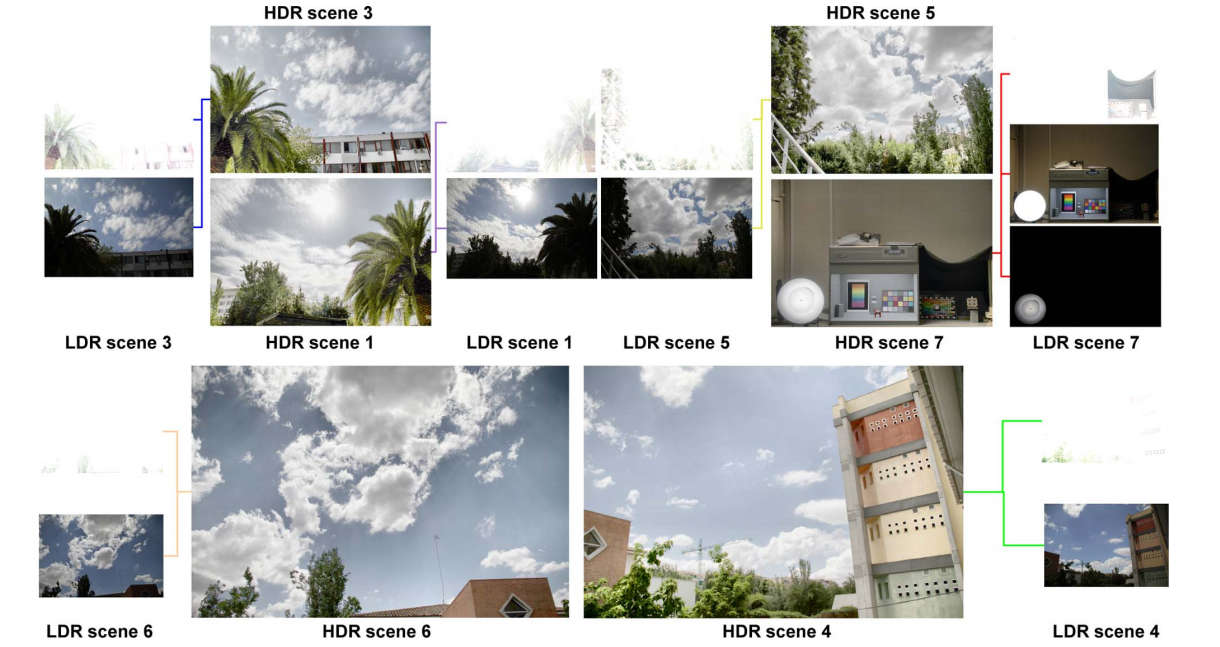

The human eye can see a remarkable range of brightness levels - far more than a typical camera can record. For example, if you look at a building against a bright sky you can see detail in both, but when you take a standard Low Dynamic Range (LDR) photo you have to expose either for the sky, in which case the building is very dark, or for the building, in which case the sky is completely white. That is, you have to choose between underexposing the building and overexposing the sky. The HDR approach takes additional exposures so as to capture the different areas of the scene correctly. Software then uses the stack of exposures to compute a single photo with an extended dynamic range, i.e. a High Dynamic Range image.

The idea is simple enough, but there are complications. When you are actually taking the photo, the question is which additional exposures you need to capture the full range of brightness present in the scene. The correct way of doing the job is to take a light meter and measure the light in the brightest and darkest parts of the scene - but who has a separate light meter these days, and using the metering built into a DSLR isn't that easy. As a result, what most photographers do is take fairly arbitrary "bracketing" exposures above and below the exposure established automatically by the camera. This usually works, but it's not perfect and it can waste exposures, which of course wastes time.

The new algorithm selects just the exposures necessary to capture the full dynamic range of the scene, and it is simple enough to implement as a real-time procedure in a standard camera. You start by taking a single exposure as determined by the camera's automatic exposure mechanism. Any pixels below a set lower threshold are underexposed, i.e. black with no detail, and any above a set upper threshold are overexposed, i.e. white with no detail.
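
The thresholding step can be sketched in a few lines of Python. This is only an illustration: the 8-bit thresholds of 10 and 245, the 1% tolerance, and the function names `clipped_fractions` and `needs_hdr` are assumptions made for the example, not values or names taken from the researchers' work.

```python
def clipped_fractions(pixels, low=10, high=245):
    """Return (underexposed fraction, overexposed fraction) for 8-bit values.

    The thresholds are illustrative assumptions, not published values.
    """
    total = len(pixels)
    if total == 0:
        return 0.0, 0.0
    under = sum(1 for p in pixels if p < low)    # black, no detail
    over = sum(1 for p in pixels if p > high)    # white, no detail
    return under / total, over / total


def needs_hdr(pixels, low=10, high=245, tolerance=0.01):
    """True if more than `tolerance` of the pixels are clipped at either end."""
    under, over = clipped_fractions(pixels, low, high)
    return under > tolerance or over > tolerance


if __name__ == "__main__":
    # A toy "image": mostly mid-tones with a few blown-out sky pixels.
    image = [128] * 90 + [255] * 10
    print(clipped_fractions(image))
    print(needs_hdr(image))
```

In a real camera the pixel values would come from the sensor or the preview image, and the tolerance would decide how many clipped pixels count as "insignificant".
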
If there are only an insignificant number of underexposed or overexposed pixels then there is no need for an HDR image. If there are underexposed pixels then the camera's response curve is used to compute the exposure needed to move them up to just below the overexposed threshold. This is reasonable because all of the pixels above the underexposed threshold are already well exposed and we don't need any more information about them, so it doesn't matter that they move into the overexposed region. The same trick is used with the overexposed pixels - the camera's response curve is used to compute the exposure that moves them down to just above the underexposed threshold. As before, the pixels we already have adequate information on become underexposed. This approach gives the best coverage of the brightness of the scene without missing information on any pixels. Of course, you can continue the process, checking the new exposures for underexposed and overexposed pixels, until there are so few that it isn't worth continuing.
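
To make the iteration concrete, here is a minimal simulation of the procedure described above. It assumes a purely linear sensor response (pixel value proportional to radiance times exposure time, clipped at 255), which is a simplification: the method itself relies on the camera's measured response curve. The names `capture` and `plan_exposures`, the thresholds, the tolerance and the shot limit are all hypothetical.

```python
LOW, HIGH, FULL_SCALE = 10, 245, 255   # assumed 8-bit thresholds


def capture(radiances, exposure_time):
    """Hypothetical linear sensor: value = radiance * time, clipped to 8 bits."""
    return [min(FULL_SCALE, int(r * exposure_time)) for r in radiances]


def plan_exposures(radiances, base_time, tolerance=0.01, max_shots=7):
    """Return sorted exposure times, including the metered `base_time`."""
    n = len(radiances)
    times = [base_time]

    # Longer exposures: lift pixels at the LOW threshold up to HIGH.
    # Under the linear assumption that is a scale factor of HIGH / LOW.
    t = base_time
    while len(times) < max_shots:
        shot = capture(radiances, t)
        if sum(p < LOW for p in shot) / n <= tolerance:
            break                      # too few dark pixels to bother
        t = t * HIGH / LOW
        times.append(t)

    # Shorter exposures: pull pixels at the HIGH threshold down to LOW.
    t = base_time
    while len(times) < max_shots:
        shot = capture(radiances, t)
        if sum(p > HIGH for p in shot) / n <= tolerance:
            break                      # too few blown-out pixels to bother
        t = t * LOW / HIGH
        times.append(t)

    return sorted(times)


if __name__ == "__main__":
    # Toy scene: deep shadow, mid-tone and bright sky in equal parts.
    scene = [1, 100, 10000]
    print(plan_exposures(scene, base_time=1.0))
```

Because each new exposure starts exactly where the previous one ran out of usable range, the exposures tile the scene's brightness range with no gaps. The `max_shots` cap is there because pixels with no light at all would never leave the underexposed region.
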

The method works, and now all we need is some enterprising camera manufacturer to build it into a camera - or, failing that, some open source firmware to offer it.

More Information

Miguel A. Martínez, Eva M. Valero, and Javier Hernández-Andrés, Color Imaging Laboratory, Department of Optics, Faculty of Sciences, University of Granada.
