Analytics Big Bang

Written by Alex Armstrong

Tuesday, 02 July 2013

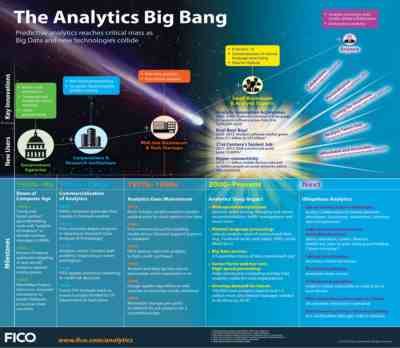

An infographic picks out highlights from the past, present and future of predictive analytics. But is this big data or simply statistics?

What counts as big data? If you define it as a data set that is too large to handle using existing methods, then the definition is always going to be expanding. The early history of computing was driven by the need to perform calculations, either in the context of war or to gain a competitive edge. One point I would have added, even further to the left, is the way in which the US Census Bureau's requirement for tabulated data led to the success of Herman Hollerith's punch card machines at the end of the 19th century. Then, in 1946, the US Census Bureau placed the first commercial order for a computer: Mauchly and Eckert's UNIVersal Automatic Computer, UNIVAC.

It is interesting to be reminded by the infographic just how long we've been concerned with web analytics, which we can now see as dating from an era when the volume of data being created was tiny by today's standards.


The top part of the infographic charts how big data has moved from being the preserve of government agencies and big business, through mid-size companies, to small businesses and analytics experts today. It envisages that in the future anyone will be able to access it. The "Big Bang" aspect is the explosion not only in the amount of data but also in the demand for analysis and the ability to analyze it. The infographic mentions R, Hadoop and natural language processing but could have included deep neural networks and machine learning.

One point it makes that is not in doubt is that there is a growing demand for people with the ability to make sense of data. If you can call yourself a data scientist, you can expect to make big bucks while the hype surrounding big data persists.

There are many worries, though. In particular, "big data" techniques are often used where the same problem could be tackled using just a single powerful machine and a stats language such as R. Using Hadoop doesn't make the data big. The real worry is that "big data" goes with new techniques, which is fine as long as this isn't an indication of ignorance of the old techniques. Statistics is still the bedrock on which all data analysis should sit.



Last Updated (Tuesday, 02 July 2013)