| NVIDIA Updates Free Deep Learning Software |

| Written by Mike James |

| Monday, 15 May 2017 |


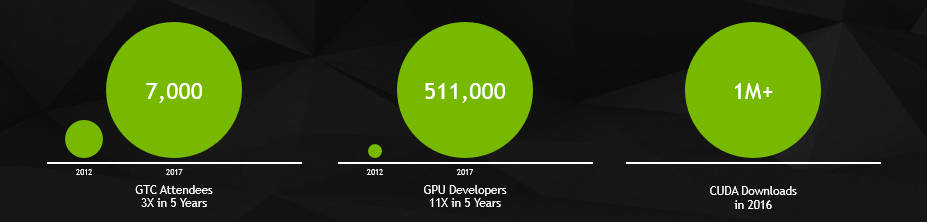

There was a time when NVIDIA was thought of as a maker of hardware for high-end gaming machines, but the need for powerful number crunching has propelled the GPU maker into new areas and it is now one of the leaders in AI. NVIDIA has some help for you if parallel computation on a GPU is your concern. Of course, it has to be an NVIDIA GPU card you are targeting, but you wouldn't expect them to promote someone else's hardware, would you? The first piece of news, however, is that the number of people interested in using the GPU for computation is growing very fast.

This is an indication of just how useful GPU approaches to general computation are. NVIDIA has been helping the interest along with its free SDK and it has just announced a new version with these improvements:

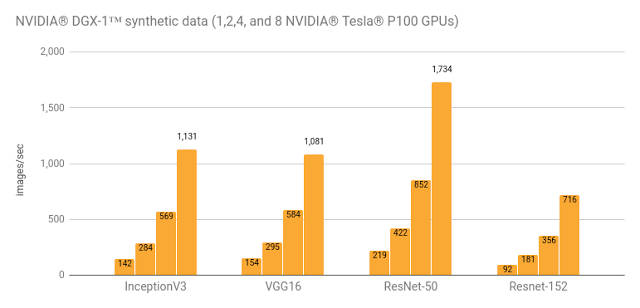

Notice that most of these speedups are credited to the latest Volta GPU architecture from NVIDIA. Volta is designed specifically for data processing, and AI in particular. With 640 Tensor Cores, it is claimed to offer 100 teraflops. Google recently published some benchmarks which show that TensorFlow scales almost linearly with the number of Pascal-generation P100 GPUs used.

You can't help but think "this would be good for graphics!" Silly idea! Why waste computing power on graphics? Can anyone remember what the G in GPU stands for these days?

More Information

NVIDIA Delivers New Deep Learning Software Tools for Developers

TensorFlow Benchmarks and a New High-Performance Guide

Related Articles

//No Comment - BB8 autonomous car

NVIDIA's Neural Network Drives A Car

A Billion Neuronal Connections On The Cheap



| Last Updated ( Monday, 15 May 2017 ) |