Artificial Intelligence - Strong and Weak

Written by Alex Armstrong

Monday, 04 May 2015


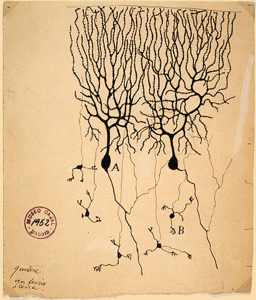

Neural Networks

There are many more side tracks of the symbolic or engineering approach to AI, but the time has come to consider the only other real alternative: connectionism and neural networks.

One of the main characteristics of human intelligence is the ability to learn. Animals also have this ability, but many symbolic programs can’t learn without the help of a human. Very early in the history of AI, people looked specifically at the ability to learn and tried to build learning machines. The theory was that if you could build a machine that could learn a little more than you told it, you could “bootstrap” your way to a full intelligence equal to or better than a human’s. After all, this is how natural selection worked to create organisms that could learn more and more.

One of the first attempts at creating a learning machine was the Perceptron. In the early days it was a physical machine, but today it would be more easily created by writing a program. The simplest perceptron has a number of inputs and a single output and can be thought of as a model of a neuron.

Brains are made of neurons, which can be thought of as the basic building blocks of biological computers. You might think that a good way of building a learning machine would be to reverse-engineer a brain, but this has proved very difficult. We are still not entirely certain how neurons work together to create even simple neural circuits, but this doesn’t stop us trying to build and use neural networks!

The basic behaviour of a neuron is that it receives inputs from a number of other neurons, and when the input stimulus reaches a particular level the neuron “fires”, sending inputs on to the neurons it is connected to. The perceptron works in roughly the same way. When the signals on its inputs reach a set level, its output goes from low to high.

In the early days we only knew how to teach a single perceptron to recognise a set of inputs. The inputs were applied, and if the perceptron didn’t fire when it was supposed to, or fired when it wasn’t, it was adjusted. This was repeated until the perceptron nearly always fired when it was supposed to. This may sound simple, but it was the first understandable and analysable learning algorithm. In fact, it was its analysability that caused it problems. The perceptron was used in many practical demonstrations where it distinguished between different images and different sounds, controlled robot arms and so on, but then it all went horribly wrong.
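The train-and-adjust loop described above can be sketched in a few lines of Python. This is only an illustration, not the original Perceptron hardware or its exact procedure: the function names, the learning rate of 1, the zero starting weights and the choice of the logical AND function as the thing to learn are all assumptions made for the example.

```python
def predict(weights, bias, inputs):
    """Fire (return 1) when the weighted sum of the inputs passes the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train(samples, epochs=20, rate=1.0):
    """Apply each input in turn and adjust the perceptron whenever it is wrong."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            # error is 0 when the output is right, so only mistakes cause a change
            error = target - predict(weights, bias, inputs)
            weights = [w + rate * error * x for w, x in zip(weights, inputs)]
            bias += rate * error
    return weights, bias


# Hypothetical training set: fire only when both inputs are on (logical AND).
AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train(AND)
print([predict(weights, bias, inputs) for inputs, _ in AND])  # [0, 0, 0, 1]
```

AND is a pattern a single perceptron can learn, so the repeated adjustments settle on weights that give the right answer for all four input pairs.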

This is the book that killed research into neural networks for 15 years!

Its downfall was a book by Marvin Minsky and Seymour Papert that analysed what a perceptron could do, and it wasn’t a lot. There were just too many easy things that a perceptron couldn’t learn, and this caused AI researchers to abandon it for 15 years or more. During this time the engineering symbolic approach dominated AI and the connectionist learning approach was thought to be the province of the crank.

Then two research groups simultaneously discovered how to train networks of perceptrons. These networks are more generally called “neural nets” and the training method is called “back propagation”. While a single neuron or perceptron cannot learn everything, a neural network with enough neurons can, in principle, learn any pattern you care to present to it. The whole basis of the rejection of the connectionist approach to AI was false. It wasn’t that perceptrons were useless; you just needed more than one of them!

Unfortunately, as with most AI rebirths, this one grew too quickly and more was promised for the new neural network approach than could be achieved. Now that things have settled down, the neural net is just one of the tools available for learning to solve problems, and we are making steady progress in understanding it all.

Some of the connectionist school are working on the weak AI problem and applying their ideas to replace human intelligence in limited ways. Currently the symbolic approach still rules in some areas. There are no chess-playing neural networks that get very far, but there is a neural net that can beat most backgammon players!

Even more recently, computing power has grown to the point where neural networks can be implemented with lots of neurons and lots of connections, and these can be trained with huge quantities of data courtesy of the web. What was discovered was that the connectionists had been right all along. Their early networks didn't work well because they were too small and trained with far too little data.
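The point about one perceptron versus several can be made concrete with the exclusive-or (XOR) pattern, the standard example of an easy thing a single perceptron cannot learn. The sketch below is an illustration under stated assumptions: the single perceptron is trained with the simple adjust-on-error rule, while the three-perceptron network uses weights chosen by hand rather than learned by back propagation, purely to show that such a network can represent XOR. All names are hypothetical.

```python
def fires(weights, bias, inputs):
    """A perceptron: output 1 when the weighted input sum passes the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


# XOR: fire when exactly one of the two inputs is on.
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def train_single(samples, epochs=100):
    """Train one perceptron by adjusting it after every mistake."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - fires(weights, bias, inputs)
            weights = [w + error * x for w, x in zip(weights, inputs)]
            bias += error
    return weights, bias


def xor_network(inputs):
    """Three perceptrons in two layers, with hand-picked (not learned) weights."""
    h_or = fires([1, 1], -0.5, inputs)    # fires if at least one input is on
    h_and = fires([1, 1], -1.5, inputs)   # fires only if both inputs are on
    return fires([1, -1], -0.5, (h_or, h_and))  # "or, but not and"


w, b = train_single(XOR)
single_correct = sum(fires(w, b, x) == t for x, t in XOR)
network_correct = sum(xor_network(x) == t for x, t in XOR)
print(single_correct, "of 4 correct with one perceptron")
print(network_correct, "of 4 correct with the small network")
```

However long the single perceptron is trained, it can never get all four cases right, because no single threshold on a weighted sum separates the XOR cases. The small network gets all four. What back propagation added was a way of finding weights like these automatically instead of choosing them by hand.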
Even today, training a neural network can take days and huge amounts of data, but the final results are often remarkable. So-called convolutional neural networks can recognize images with the accuracy of a human, and better than a human in many cases. The list of things that neural networks can do, and the success that they have in learning tasks, is astonishing.

As a result AI is on the rise, but is it strong or weak?

We know how a neural network does the job it does. There are some things we would still like to know, such as how to optimize the training and exactly how a network recognizes something, e.g. a cat. We would like to know what features it uses to recognize a cat, and there are techniques to find out.

So given that we understand neural networks well enough, can they reach the status of real intelligence?

The answer is: perhaps.

At the moment the neural networks that we use are very small compared to the size of a human brain. At the moment they are still in the realm of the Watt governor: mechanisms. What we cannot be sure about is what happens when they get bigger, much bigger. Perhaps human-like intelligence emerges when neural networks get to a particular size. What is that size? My guess is that it is just a bit bigger than the biggest neural network we can understand.

For now we are very definitely working with weak AI, and if anyone tries to tell you otherwise then they are wrapping up their creation in sci-fi clothes to try to get your attention and possibly your money. At the moment all of the AI systems we have created are mechanisms that do some job that humans do. True intelligence is still a long way over the horizon.


Last Updated ( Tuesday, 05 May 2015 )