DNA-Net - Machine Learning for Designer Offspring?

Written by Sue Gee

Saturday, 23 November 2019

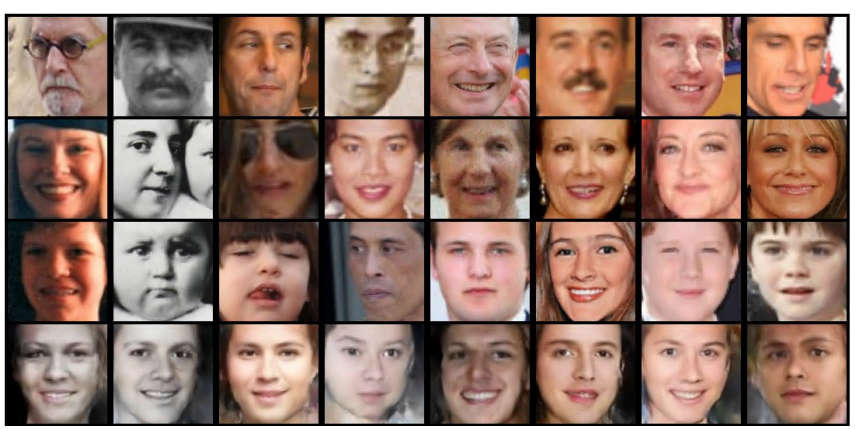

Want to know what your offspring with a given other might look like? Now a neural network can tell you. But do you really want to know?

Machine learning is now being applied to visual kinship recognition. Researchers have proposed and tested a face generation model to predict the appearance of a child given the parents. Their experiments, judged by both subjective and objective standards, indicate that it synthesizes convincing results.

The research comes from Northeastern University and is led by Professor Yun Raymond Fu. It tackles the task of kin-face generation with the aim of predicting the appearance of a child from a pair of parents, conditioned on high-level features (i.e. age and gender), which provides control over the desired characteristics.

In this set of photos the top row shows two (real) parents and in the bottom row one of the photos is generated and the other three are real. The paper challenges readers to identify the generated image - but unfortunately doesn't provide the answer!

The introduction to the paper "What Will Your Child Look Like? DNA-Net: Age and Gender Aware Kin Face Synthesizer" states that visual kinship recognition aims to identify blood relatives from facial images and that it has practical applications ranging from video surveillance and law enforcement to automatic family album management. It goes on to explain that the process of inheritance can be generalized in two main steps:

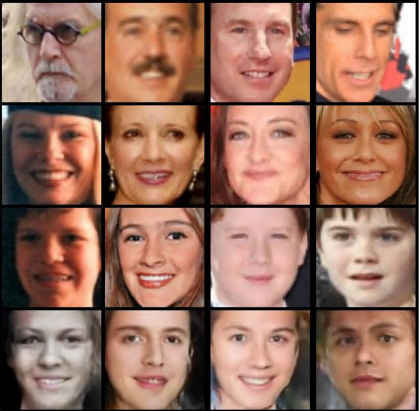

This means that children are not identical to a single parent but tend to resemble both parents in various ways. The proposed kinship generation model has a two-step learning procedure inspired by the genetic process:

Step 1: a deep generative Conditional Adversarial Autoencoder (CAAE) is trained on a large-scale face dataset to learn to map facial appearance to high-level features with knowledge of age and gender.

Step 2: a novel DNA-Net, trained on a smaller kinship dataset, transforms high-level features to genes, i.e. it translates the genes of a parent pair to those of a child.

By manipulating the gene codes in DNA-Net, the researchers were able to generate multiple siblings, and the model allows both the age and the gender of the generated child to be changed.

Sample results are shown below. Each column corresponds to a family, with the faces of fathers on the first row, mothers on the second, real children on the third, and generated children on the bottom.
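The data flow of the two-step procedure described above can be sketched roughly as follows. This is a toy illustration only, not the paper's implementation: the names `Face`, `encode`, `dna_net`, `decode`, `synthesize_child` and `FEATURE_DIM` are all hypothetical, the "images" are plain feature vectors, and the learned DNA-Net mapping is replaced by a random per-dimension blend of the parents' codes with a little noise.

```python
import random
from dataclasses import dataclass
from typing import List

FEATURE_DIM = 8  # hypothetical size of the high-level feature vector


@dataclass
class Face:
    """Stand-in for an image: a feature vector plus conditioning labels."""
    features: List[float]
    age: int
    gender: str


def encode(face: Face) -> List[float]:
    """Step 1 stand-in: a trained CAAE encoder would map pixels to features.
    Here the 'image' already is its feature vector, so this is the identity."""
    return list(face.features)


def dna_net(father: List[float], mother: List[float],
            rng: random.Random, noise: float = 0.05) -> List[float]:
    """Step 2 stand-in: turn the two parent codes into a child code.
    A random per-dimension weight replaces the learned mapping; this is
    NOT the paper's network, only a placeholder with the same inputs/outputs."""
    child = []
    for f, m in zip(father, mother):
        w = rng.random()  # share taken from the father for this dimension
        child.append(w * f + (1.0 - w) * m + rng.gauss(0.0, noise))
    return child


def decode(code: List[float], age: int, gender: str) -> Face:
    """Stand-in for the CAAE decoder, which is conditioned on age and gender."""
    return Face(features=code, age=age, gender=gender)


def synthesize_child(father: Face, mother: Face, age: int, gender: str,
                     seed: int) -> Face:
    """Encode both parents, mix their codes, decode with the requested labels."""
    rng = random.Random(seed)
    code = dna_net(encode(father), encode(mother), rng)
    return decode(code, age, gender)


rng = random.Random(0)
father = Face([rng.uniform(-1, 1) for _ in range(FEATURE_DIM)], 40, "male")
mother = Face([rng.uniform(-1, 1) for _ in range(FEATURE_DIM)], 38, "female")

# Different seeds give different "siblings"; age and gender are chosen freely.
daughter = synthesize_child(father, mother, age=10, gender="female", seed=1)
son = synthesize_child(father, mother, age=25, gender="male", seed=2)
print(daughter.age, daughter.gender, len(daughter.features))
print(son.features != daughter.features)
```

In this sketch, varying the seed plays the role of manipulating the gene codes to obtain siblings, while the `age` and `gender` arguments stand in for the conditioning that the decoder receives.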

In the evaluation phase, 35 human participants were shown 30 sets of images and asked to vote on which child image they thought showed the true child of a pair of parents. They were not made aware that some of the pictures were generated rather than real. To quote the paper:

"The generated children obtained more votes than the actual. Specifically, about 60.29% of the generated stumped the user into believing it was the true child, which was measured by the number of votes. Thus, the faces generated by the proposed appeared more genuine than that of the actual child to humans."

The researchers conclude:

"Quantitative and qualitative experimental results show the generated children faces have high similarity with parents as well as similar heritability with real children. Our study could be useful in a variety of applications, ranging from population genetics and gene-mapping studies, to face modeling and reconstruction applications."

This research may have led to an interesting model but, as with other machine learning studies using images, there is also the potential for using such deep fakes in a misleading way. And how about selecting your partner on the grounds of what your offspring might look like? A scary thought.

More Information

What Will Your Child Look Like? DNA-Net: Age and Gender Aware Kin Face Synthesizer

Related Articles

GANalyze - What Makes Pictures Memorable?

GANs Create Talking Avatars From One Photo

How Old - Fun, Wrong, Potentially Risky?


Last Updated ( Saturday, 23 November 2019 )