AI Helps Map A Brain

Written by Mike James

Tuesday, 06 August 2019

It is ironic that our best hope of understanding natural intelligence might be to enlist the help of artificial intelligence. Google has just reported mapping the entire brain of a fruit fly using neural networks to do the processing. This is a big leap for fly kind.

Until recently the nematode worm C. elegans was the best candidate for an AI pinup, but you might have guessed that the workhorse of biology, the fruit fly Drosophila melanogaster, might manage to muscle in on the act. Now Google Research has posted a blog and a paper that explain how it has managed to map the entire brain of this small creature. This is a big step forward because previously we only managed to map nervous systems involving a few hundred neurons:

"An important advantage of flies is their size: Drosophila brains are relatively small (one hundred thousand neurons) compared to, for example, a mouse brain (one hundred million neurons) or a human brain (one hundred billion neurons)."

Even so, moving to one hundred thousand neurons means a lot of neurons and a lot of connections. Almost as interesting is how the feat was accomplished.

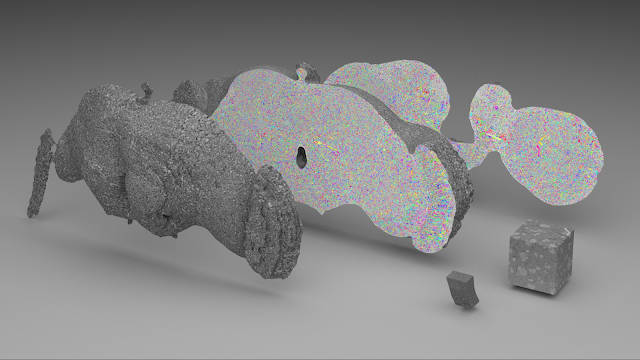

The fly brain was sectioned into thousands of 40 nm slices and each one was scanned by an electron microscope. The resulting 40 trillion pixels of imagery were then aligned to create a 3D image. This might seem like the end of the story, but a 3D image doesn't give you the wiring diagram. To construct this, a cloud of Tensor Processing Units (TPUs) was used to apply a Flood-Filling Network (FFN), which traced each neuron.

However, the basic FFN tended to get lost when there was missing data in the form of missing slices. To help with this problem, a neural network was trained to "hallucinate" the missing slices. Hallucinate isn't my word but the research team's. I think it is better to think of it as 3D in-painting, with the network attempting to fill in the missing data so that it fits in with the slices that we do have. This allowed the FFN to follow connections more accurately.

As well as the data, they also created a viewer, Neuroglancer:

"an open-source project (github) that enables viewing of petabyte-scale 3D volumes, and supports many advanced features such as arbitrary-axis cross-sectional reslicing, multi-resolution meshes, and the powerful ability to develop custom analysis workflows via integration with Python."

You can see Neuroglancer in action in the accompanying video. I have to admit that the only thing this video did for me was to make me feel overwhelmed at the amount of data. It seems clear that we not only need AI's help with creating these models and visualizations, we also need some help in understanding it all.

There are plans for more work:

"Our collaborators at HHMI and Cambridge University have already begun using this reconstruction to accelerate their studies of learning, memory, and perception in the fly brain. However, the results described above are not yet a true connectome since establishing a connectome requires the identification of synapses. We are working closely with the FlyEM team at Janelia Research Campus to create a highly verified and exhaustive connectome of the fly brain using images acquired with “FIB-SEM” technology."

There is still a long way to go before there is even a hint of a convergence between artificial and natural neural networks. In fact, it isn't even particularly clear what "to understand" a fly's brain means.
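To make the missing-slice problem described above more concrete, here is a minimal toy sketch in Python. It is not the FFN and not the team's in-painting network, both of which are trained neural networks. It assumes a classical threshold-based 3D flood fill in place of the FFN and a simple average of the neighboring slices in place of the learned "hallucination". The function names and the tiny demo volume are invented for illustration only.

```python
from collections import deque


def inpaint_missing_slices(volume, missing):
    """Fill each missing z-slice with the voxel-wise average of the nearest
    available slices below and above it. This is a crude, assumed stand-in
    for the learned in-painting described in the article."""
    depth = len(volume)
    filled = [[row[:] for row in sl] for sl in volume]
    for z in sorted(missing):
        below = next((k for k in range(z - 1, -1, -1) if k not in missing), None)
        above = next((k for k in range(z + 1, depth) if k not in missing), None)
        sources = [volume[k] for k in (below, above) if k is not None]
        if not sources:
            continue  # nothing to interpolate from
        for y, row in enumerate(filled[z]):
            for x in range(len(row)):
                row[x] = sum(s[y][x] for s in sources) / len(sources)
    return filled


def flood_fill_3d(volume, seed, threshold=0.5):
    """Return the set of (z, y, x) voxels that are 6-connected to the seed
    and have a value of at least `threshold` (classical flood fill)."""
    depth, height, width = len(volume), len(volume[0]), len(volume[0][0])
    z0, y0, x0 = seed
    if volume[z0][y0][x0] < threshold:
        return set()
    visited = {seed}
    queue = deque([seed])
    steps = ((1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1))
    while queue:
        z, y, x = queue.popleft()
        for dz, dy, dx in steps:
            nz, ny, nx = z + dz, y + dy, x + dx
            inside = 0 <= nz < depth and 0 <= ny < height and 0 <= nx < width
            if inside and (nz, ny, nx) not in visited \
                    and volume[nz][ny][nx] >= threshold:
                visited.add((nz, ny, nx))
                queue.append((nz, ny, nx))
    return visited


def make_demo_volume():
    """Five 3x3 slices with one 'neuron' running through the center voxel;
    slice 2 is blanked out to imitate a missing section."""
    depth, size = 5, 3
    volume = [[[0.0] * size for _ in range(size)] for _ in range(depth)]
    for z in range(depth):
        volume[z][1][1] = 1.0
    volume[2] = [[0.0] * size for _ in range(size)]
    return volume


if __name__ == "__main__":
    vol = make_demo_volume()
    seed = (0, 1, 1)
    print(len(flood_fill_3d(vol, seed)))    # 2: the trace stops at the gap
    repaired = inpaint_missing_slices(vol, {2})
    print(len(flood_fill_3d(repaired, seed)))  # 5: the trace crosses the gap
```

In this toy, the trace started at the top of the "neuron" stalls at the blank slice, and once the gap is filled from its neighbors the same trace runs the full depth of the volume. The real system has to cope with ambiguous, noisy imagery, which is why learned networks are used for both steps.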

More Information

An Interactive, Automated 3D Reconstruction of a Fly Brain
Peter H. Li, Larry F. Lindsey, Michał Januszewski, Zhihao Zheng, Alexander Shakeel Bates, István Taisz, Mike Tyka, Matthew Nichols, Feng Li, Eric Perlman, Jeremy Maitin-Shepard, Tim Blakely, Laramie Leavitt, Gregory S.X.E. Jefferis, Davi Bock and Viren Jain. Google Research, Janelia Research Campus, Howard Hughes Medical Institute, MRC Laboratory of Molecular Biology, University of Cambridge and Johns Hopkins University.

Related Articles

Neurons Are Two-Layer Networks
Worm Balances A Pole On Its Tail
A Worm's Mind In An Arduino Body
OpenWorm Building Life Cell By Cell


Last Updated ( Tuesday, 06 August 2019 )