Inceptionism: How Neural Networks See

Written by Mike James

Saturday, 20 June 2015

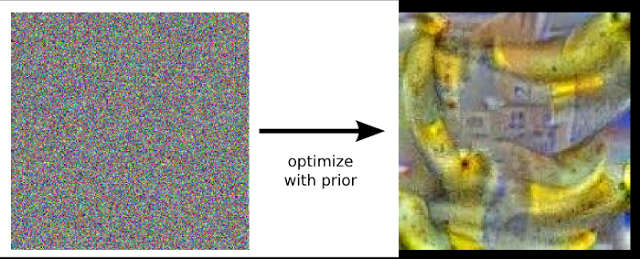

Neural networks achieve great things, but it is slightly worrying that we don't really know how they work. Inceptionism is an attempt to make neural networks give up their secrets by showing us what they see. It creates some amazing artwork along the way.

You can look at the results of this work as pure art, but that would be missing the main message. It tells us not only how a neural network sees and recognizes things, but also how similar this is to our own efforts.

The basic idea is simple enough to understand. When you train a neural network, each layer learns a set of features that become increasingly sophisticated as you move up the layers toward the output. The first layer recognizes edges and lines, say. The next puts the edges together to recognize rectangles and other shapes. The next learns features that correspond to assemblies of shapes, and so on.

If you take a trained network and show it some random data, you can take the output and work out how the data needs to be changed to move it in the direction of something the network recognizes, for example a banana. What is interesting is that this on its own doesn't make the input image look more banana-like to a human, and so it isn't very useful. What the new technique adds to the mix is a prior constraint that the pixels in the input image have to have similar statistics to natural images. If you move in the direction of "banana" while keeping the pixel statistics similar to what you find in natural images, then bananas do indeed appear.
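To make the idea concrete, here is a minimal sketch in Python with NumPy. It is not the researchers' code. The "network" is assumed to be a single linear scorer (`weights`), and the natural-image prior is approximated by a simple penalty on differences between neighbouring pixels. The names `dream_class`, `roughness` and `prior_weight` are hypothetical, chosen only for this illustration.

```python
import numpy as np

def roughness(img):
    """0.5 * sum of squared differences between neighbouring pixels."""
    dx = img[:, 1:] - img[:, :-1]
    dy = img[1:, :] - img[:-1, :]
    return 0.5 * float((dx ** 2).sum() + (dy ** 2).sum())

def roughness_grad(img):
    """Gradient of roughness(img) with respect to every pixel."""
    g = np.zeros_like(img)
    dx = img[:, 1:] - img[:, :-1]
    dy = img[1:, :] - img[:-1, :]
    g[:, 1:] += dx
    g[:, :-1] -= dx
    g[1:, :] += dy
    g[:-1, :] -= dy
    return g

def dream_class(weights, steps=200, lr=0.1, prior_weight=1.0, seed=0):
    """Gradient ascent on the input image, starting from random noise.

    Assumption: the class score is the toy linear function sum(weights * img),
    so its gradient with respect to the image is simply `weights`.
    The prior term pulls the image toward smooth, less noisy pixel statistics.
    """
    rng = np.random.default_rng(seed)
    img = rng.normal(scale=0.1, size=weights.shape)
    for _ in range(steps):
        grad = weights - prior_weight * roughness_grad(img)
        img = np.clip(img + lr * grad, -1.0, 1.0)  # keep pixels in a valid range
    return img
```

Calling `dream_class(w, prior_weight=0.0)` shows the unconstrained case, where the image is pushed toward whatever raises the score regardless of how noisy it looks. A positive `prior_weight` trades some score for a smoother result. A real network would need its gradient computed by backpropagation rather than read off directly.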

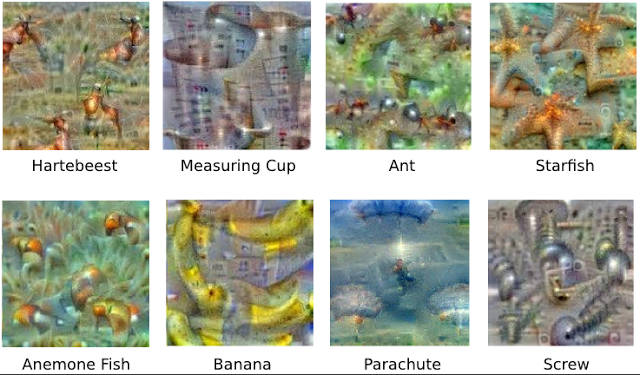

They look more like a dream of bananas, but the image is made up of what the neural network has extracted that represents banana-ness. You get similar dreamlike results when you try to reconstruct other objects.

These images are not only fun to look at, they are useful diagnostics of how the network is functioning. For example, the researchers discovered that one of their networks thought a dumbbell always had to have a human arm holding it:

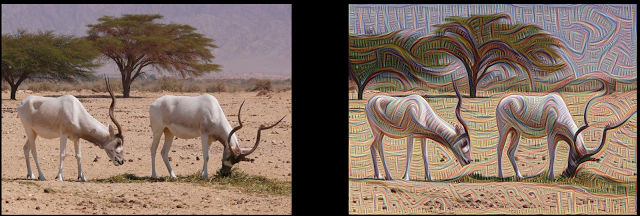

Presumably a dumbbell without a human lifting it had never been shown to the network, so it failed to learn the idea of a dumbbell in isolation.

In a second part of the experiment, rather than starting from a random input, a photo of something real was used and the optimization process modified it to increase the output of one of the layers. If this is done for one of the lower layers, then what is enhanced in the photo are low-level features such as lines:

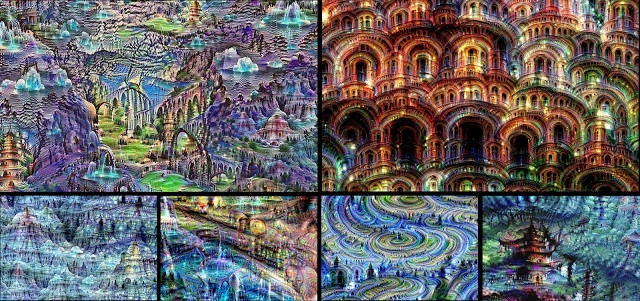

Left: Original photo by Zachi Evenor. Right: processed by Günther Noack, Software Engineer.

Move up the layers and the photo changes to bring out the higher level features that the network is detecting. The results really are like something from a hallucination or a surrealistic painting:

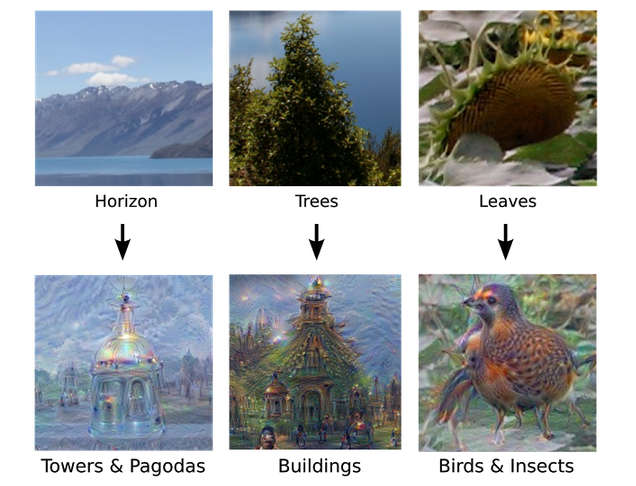
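The step of modifying a photo to increase a layer's output can be sketched roughly as follows. This is an illustration under stated assumptions, not the method used for the pictures. The layer is assumed to be one fully connected ReLU layer with a weight matrix `weights`, the photo is a flattened vector `x`, and the objective is half the sum of squared activations. The function names and the normalized step size are hypothetical choices.

```python
import numpy as np

def layer_activations(weights, x):
    """Toy stand-in for one network layer: a linear map followed by ReLU."""
    return np.maximum(weights @ x, 0.0)

def amplify_layer(weights, x, steps=20, step_size=0.05):
    """Change the input so the chosen layer responds more strongly.

    The objective is 0.5 * sum(activations ** 2). For a ReLU layer its
    gradient with respect to the input is weights.T @ activations.
    """
    x = np.array(x, dtype=float)  # work on a copy, leave the original alone
    for _ in range(steps):
        act = layer_activations(weights, x)
        grad = weights.T @ act
        scale = np.abs(grad).mean()
        if scale == 0.0:          # the layer is silent, nothing to amplify
            break
        x += step_size * grad / scale  # normalized gradient ascent step
    return x
```

Whatever the layer already responds to in the input gets exaggerated. With a lower layer of a real network that would be edges and lines, and with a higher layer it would be more complex structures.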

Feeding in images of clouds even produces an extreme version of what we all tend to see when daydreaming while looking at the sky.

Finally, when the team took these images and fed the output back into the input of the network, the results became even more like hallucinations.
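The feedback loop itself is easy to sketch. In the Python below, `enhance` is a hypothetical placeholder for whatever function applies one round of the network-driven enhancement. The small zoom between rounds is an assumption taken from the original Google description rather than from anything stated here, and `zoom_center` and `feedback_loop` are illustrative names.

```python
import numpy as np

def zoom_center(img, margin=1):
    """Crop `margin` pixels from each edge, then resize back to the
    original shape using nearest-neighbour sampling."""
    h, w = img.shape
    if 2 * margin >= min(h, w):
        raise ValueError("margin too large for this image")
    crop = img[margin:h - margin, margin:w - margin]
    rows = np.arange(h) * crop.shape[0] // h
    cols = np.arange(w) * crop.shape[1] // w
    return crop[np.ix_(rows, cols)]

def feedback_loop(enhance, img, iterations=5, margin=1):
    """Repeatedly feed the enhanced output back in as the next input.

    Returns every frame, starting with the original image.
    """
    frames = [img]
    for _ in range(iterations):
        img = zoom_center(enhance(img), margin)
        frames.append(img)
    return frames
```

Any function that takes an image array and returns one of the same shape can be passed as `enhance`, so each frame is the previous frame's output pushed a little further.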

There are a number of things you can draw from these experiments. The first is that neural networks do contain enough information to reconstruct many of the things they have been shown. The large-scale features that the networks use don't correspond to the sort of neat prototypes that we might imagine, but to messy parts of things that can be put together in ways that the network has never seen. Is this imagination or creativity?

The work also suggests a way to resolve the problem of adversarial and misleading images that we previously discussed in The Deep Flaw In All Neural Networks. It is possible to alter the classification of an image by adding small values to a large number of pixels. The image looks the same to a human, but different to a neural network. Similarly, you can construct images that look nothing like the object that the neural network says they are. Both phenomena could be a result of the images not having a statistical distribution of values typical of "natural" images. Perhaps neural networks don't build this constraint in because they don't have to: every image they are presented with satisfies this condition automatically. Clearly this should be investigated.

The pictures included here all come from the Inceptionism gallery, which has many more and is well worth seeing.

More Information

Inceptionism: Going Deeper into Neural Networks

Related Articles

The Deep Flaw In All Neural Networks

The Flaw Lurking In Every Deep Neural Net

Neural Networks Describe What They See

Neural Turing Machines Learn Their Algorithms

Google's Deep Learning AI Knows Where You Live And Can Crack CAPTCHA

Google Uses AI to Find Where You Live

Deep Learning Researchers To Work For Google

Google Explains How AI Photo Search Works

Google Has Another Machine Vision Breakthrough?


Last Updated (Saturday, 20 June 2015)