Any Camera Can Capture Ultra Fast Action

Written by David Conrad

Sunday, 12 May 2019

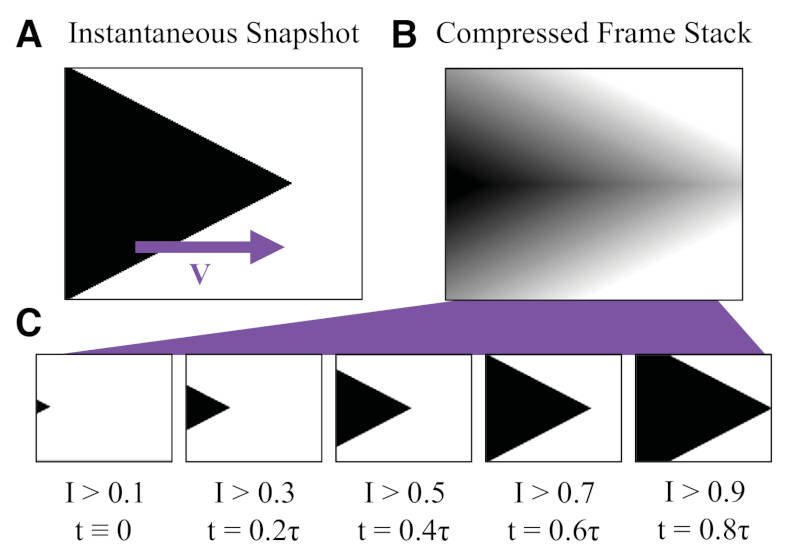

Ultra high speed videos of things like water droplets hitting the ground or balloons bursting are fascinating, but they need a high priced camera to render them as slowmo - or do they? Could computational photography be the only extra you need?

Just when you thought that there was nothing amazingly new that computational photography could do, a team from the École Polytechnique Fédérale de Lausanne has demonstrated that a fairly ordinary camera can capture high speed video and hence anyone can be that slowmo guy.

How is this possible? The simple answer is that it is the blurry bits that count.

When you take a video of something moving fast, it changes position in the time it takes the camera to capture a single frame. It is this change in position that causes the blur that you associate with fast moving things taken by a standard camera. Sometimes the blur is interesting enough to create an artistic image, but it also contains information about how things are moving.

To extract this information, the algorithm has to have some idea of what the object looks like before it starts moving and blurring. So the first step is to take a photo of the object at rest in such a way that all of the components that are going to create the blur can be seen. From this, the algorithm can use the blur to create the intermediate sharp frames that would have been caught if the camera's frame rate had been high enough.

This does reduce the color depth of the image because shades of grey are being mapped to movement. We are trading color resolution for temporal resolution. You can see the general idea in the illustration taken from the paper:

If you have a black, triangular, fast moving object A, then the image captured will be a blurred triangle as in B. If you threshold the image at increasing grey levels, the result is C, a set of intermediate frames that are what you would have got if you had a high speed camera.

Unfortunately the results are more of scientific interest than artistic wow factor. Here is a water drop striking a surface:

The technique has been checked against a real high speed camera and it works. If you need to record an essentially binary high speed event then this is a low cost way to do it.

Can we do any better? My guess is that there is more information in the blurred images than is being extracted. Perhaps a more complex temporal deconvolution? Is this another job for a neural network? Perhaps a neural network could dream the intermediate frames?

More Information

The virtual frame technique: ultrafast imaging with any camera
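The thresholding idea in the article can be tried out with a toy simulation. What follows is only a minimal sketch, not the paper's implementation. It assumes a single row of pixels, a perfectly binary scene (dark object on a bright background) and a dark front that only ever advances during the exposure, so that each pixel's grey level records how long it stayed bright. The function names `simulate_blurred_exposure` and `virtual_frames` are made up for this illustration.

```python
def simulate_blurred_exposure(width, n_steps):
    """Average n_steps sharp binary frames into one blurred exposure.

    Pixel value 1 is bright background, 0 is the dark object.
    Assumption: the dark front sweeps left to right and never retreats.
    """
    bright_count = [0] * width
    true_frames = []
    for step in range(n_steps):
        # Hypothetical motion: number of pixels covered by the end of this step.
        front = (step + 1) * width // n_steps
        frame = [0 if x < front else 1 for x in range(width)]
        true_frames.append(frame)
        for x in range(width):
            bright_count[x] += frame[x]
    # Grey level = fraction of the exposure during which the pixel was bright.
    blurred = [count / n_steps for count in bright_count]
    return blurred, true_frames


def virtual_frames(blurred, n_frames):
    """Threshold one blurred exposure at increasing grey levels."""
    frames = []
    for k in range(1, n_frames + 1):
        level = k / n_frames
        # A pixel darker than `level` was already covered at fraction k/n_frames
        # of the exposure, so it is drawn dark in the k-th virtual frame.
        frames.append([0 if value < level else 1 for value in blurred])
    return frames


if __name__ == "__main__":
    blurred, truth = simulate_blurred_exposure(width=8, n_steps=4)
    print("blurred exposure:", blurred)
    for recovered in virtual_frames(blurred, n_frames=4):
        print(recovered)
    print("matches the sharp frames:", virtual_frames(blurred, 4) == truth)
```

In this toy case the thresholded frames reproduce the sharp frames exactly. It also shows the trade the article mentions: the number of distinct grey levels in the blurred image caps the number of intermediate frames you can pull out, so an 8-bit image with 256 levels cannot yield more than 255 different binary frames.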

Related Articles

Perfect Pictures In Almost Zero Light

Using Super Seeing To See Photosynthesis

Dreams Come To Life With Machine Learning

Automatic High Dynamic Range (HDR) Photography
