The Paradox of Artificial Intelligence

Written by Harry Fairhead

Friday, 14 April 2023


The point is that we use the word "intelligence" incorrectly. We seem to think that it ascribes a quality to an entity; for example, we say that an entity has or does not have intelligence. Well, this isn't quite the way it works in an operational sense. Intelligence isn't a quantity to be ascribed; it is a relationship between two entities. If the workings of entity B can be understood by entity A, then there is no way that entity A can ascribe intelligence to entity B. On the other hand, if entity B is a mystery to entity A, then A can reasonably describe B as "intelligent".

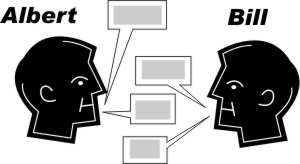

A has no idea how B works, so the conversation is enough for A to say B is intelligent.

However, A is a computer scientist and discovers exactly how B works.

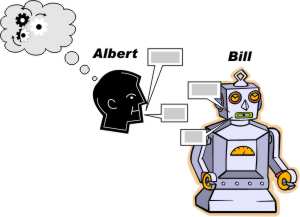

Intelligence is a relationship between A and B

If you try this definition out, you can see that it starts to work. Entity A is unlikely to understand itself, so it, and other entities of its kind, ascribe intelligence to themselves and to their collective kind. Now consider "Humans are intelligent" or "I am intelligent". True enough if spoken by a human, but if an advanced alien, entity C, arrives on the planet, it might understand how we work. With this different relationship, C might not ascribe anything analogous to intelligence to us.

Intelligence is a statement of our ignorance of how something works

Looked at in this way, it is clear that attempts to create artificial intelligence are partly doomed to failure. As soon as you have a program that does the job, playing chess say, you can't ascribe intelligence to it any longer because you understand it. Another human, however, who doesn't understand it might well call it intelligent.

In the future you might build a very sophisticated robot and bring it home to live with you. The day you bring it home you would understand how it works and regard it as just another household appliance. Over time you might slowly forget its workings and allow it to become a mystery to you, and with the mystery comes the relationship of intelligence. Of course, with the mystery also comes the willingness to ascribe feelings, personality and so on to this intelligent robot. Humans have been doing this for many years, and even simple machines are given characters, moods and imputed feelings. You don't need to invoke intelligence for this sort of animism, but it helps.

Notice that this discussion says nothing about other, arguably more important, characteristics of humans such as self-awareness. This is not a relationship between two entities and is much more complex.

Intelligence is something that doesn't stand up to close inspection of how it works

So can AI ever achieve its goal?
If you accept that intelligence is a statement of a relationship between two entities, then only in a very limited way. If intelligence is a statement that I don't understand a system, and if AI is all about understanding it well enough to replicate it, then you can see the self-defeating nature of the enterprise.

Given that we cannot completely understand large language models, does this mean that we might well consider them intelligent? The waters are muddied by the fact that we do understand the general principles, if not the details, of how they work. People keep stating that they are just autocomplete machines, or that they merely reproduce the statistics of natural language, and so cannot possibly be intelligent. But who says a sufficiently complex autocomplete cannot be unfathomable and deep, and so be regarded as intelligent? The same goes for sufficiently complex statistical models. After all, to return to an earlier point, we are finite state machines; how could we be anything else?

That we can only regard a system as intelligent if we don't understand it is a nice (almost) paradox for a subject that loves such self-referential loops.


Last Updated (Friday, 14 April 2023)

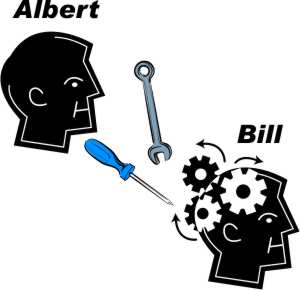

Knowing how B works, A cannot assign "intelligence" to B even though nothing else has changed.
