| Programmer's Guide To Theory - What Is Recursion |

| Written by Mike James | ||||

| Monday, 02 August 2021 | ||||

Page 1 of 3 So you know what recursion is, but do you know why it is? This is what this extract from Chapter 16 of my recent book is all about. A Programmers Guide To Theory

Now available as a paperback and ebook from Amazon.

Contents

Bonus Chapter: What Would P=NP Look Like? ***NEW!

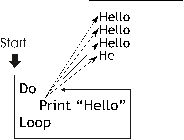

<ASIN:1871962439> <ASIN:1871962587> Recursion is interesting from the point of view of complexity theory and computer science in general because it is the natural way of implementing algorithms that take exponential time. This isn't because recursion is in any sense bad - it's just a more advanced form of the flow of control that makes itself useful when a problem needs it. One of the advantages of recursion is that it can be used to implement "divide and conquer" algorithms that are sub-polynomial in execution time. Recursion is not an essential concept in the sense that anything you can achieve using it you can achieve without it. If this were not the case then the Church-Turing thesis would be false. On the other hand, recursion is so powerful that it can be used as the basis for the very definition of what computation is. You can tell that attitudes to recursion vary greatly from "why do I need this" to "this is all I need", but the fact that is the subject of a well-known joke makes it harder to ignore. Definition of recursion: Recursion - see Recursion. Recursion is, loosely speaking, self-reference. There are some who just take to this idea and there are others, the majority, who always feel a little unsure that they have really understood how it all works. Recursion is important because it provides a way of implementing a type of algorithm that would otherwise be difficult. In many textbooks recursion is introduced as an advanced method and a few examples are given. Sometimes you get the impression that the topic has been included just for the reason that it is in other books. Usually the examples are problems that are already stated in recursive form and in this case a recursive implementation seems natural. What is generally overlooked is what recursion is good for. What makes a problem suitable for a recursive implementation? The answer is surprisingly simple and it reveals the link between recursion and algorithms that have exponential runtimes. Ways of Repeating ThingsBefore we move on to recursion we need to look at the most basic ways of repeating something, if only to make sure we are using the same vocabulary. When you first learn to program one of the key ideas is writing a set of instructions that repeats. For example, to print “Hello” repeatedly I’d use a loop: Do Print “Hello” You can think of the Loop as an instruction to go back to the Do and repeat all of the instructions between the two all over again. In this case you just go round and round the loop forever printing Hello each time. This is an "infinite” loop, or more accurately according to earlier chapters, a finite but unbounded loop. However, most loops in sets of instructions are finite loops which repeat a number of times. There are two broad types of finite loop, enumeration and conditional. The difference is that an enumeration loop repeats a given number of times, i.e. "print hello five times" is an enumeration loop, as is the familiar for loop found in most programming languages. A conditional loop keeps on repeating until a final end result is achieved, i.e. "stir the coffee until the sugar has dissolved" is a conditional loop, as are the familiar while or until loops found in most programming languages.

To state the obvious, a loop is called a loop, because it loops around the text of a program. There is a very deep sense in which every programmer thinks of a loop as a circular shape and the only differences between loops is how you get out of a loop. In principle, you only need a conditional loop because this can be used to implement an enumeration loop. In this sense a conditional loop is universal, much like the universal logic gate. |

||||

| Last Updated ( Tuesday, 03 August 2021 ) |