Functional And Dysfunctional Programming

Written by Mike James

Thursday, 15 November 2018

What is functional programming? Surely all our programs should function in some way or other? No - that's not what it means. Functional programming is altogether different...


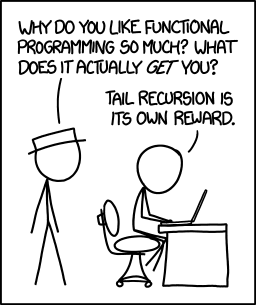

More cartoon fun at xkcd, a webcomic of romance, sarcasm, math, and language

Math: don't you just love it? So much so that a lot of programmers wish that programming was more like math. There have been lots of attempts to make programming precise, in the sense that programs can be verified just like a mathematical proof. The power of math is such that anything that makes messy programming look more like perfect math seems worth pursuing.

But there are so many ways in which programming isn't like math at all. The big difference is that programming, as we practice it, mostly includes the ideas of time and state change. A program isn't a static statement of some universal truth; rather, it is a set of instructions that have a time sequence built in. A program doesn't even follow the same route each time it is executed. It reacts to the state of the environment around it, and it can change its own state each time it is run. A program is so much more than a mathematical proof.

If you want to confuse a mathematician, write on one side of a card: "The statement on the other side of this card is true" and on the other side: "The statement on the other side of this card is false", and hand it over. The result is usually a mathematician in meltdown. A programmer, on the other hand, is happy to think that the state changes as the card is flipped. The statement on the side you are looking at is true. Flip the card over and the statement on the other side becomes false, and so on. You have a flip-flop that changes state as you turn the card over. Don't take this example too seriously, because if you do you will miss the point and risk vanishing in a puff of logic.

The difference between programming and math is that in programming you can, and probably must, write things like:

x = x + 1

but in math this is just silly. So silly that you can use it to prove that 1=0 by subtracting x from both sides. This difference is one of the reasons beginners find programming difficult. Until they learn to see time as an element of what they read and write, it all seems very alien and the idea of a variable is mysterious.

To be fair, math does have variables, but they are used in very restricted ways compared to the way they are used in programming; and where they are used more as they are in programming, the math begins to look like programming. Math mostly deals with change by taking a snapshot and making everything static. What else is a function in the f(t) sense other than frozen time? It isn't a dynamic process but a subset of the Cartesian product of two sets. If this is too abstract, think of a function as a graph or chart of f against t. Nothing dynamic here.
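The contrast between state that changes over time and a static function can be made concrete with a short Python sketch. It is not from the original article: the names `history`, `f_table`, and `Card` are invented for illustration, and it assumes the kind of statement meant above is an update such as `x = x + 1`.

```python
# Assignment is an instruction executed at a moment in time, not an equation.
x = 1
history = [x]
for _ in range(3):
    x = x + 1          # the new value of x is computed from the old value
    history.append(x)  # record the state after each step

# A function in the mathematical sense is static: a fixed set of (t, f(t)) pairs.
# Here f(t) = t * t is tabulated over a small domain (an illustrative choice).
f_table = {t: t * t for t in range(4)}


class Card:
    """Hypothetical model of the two-sided card as a flip-flop."""

    def __init__(self):
        self.showing_true = True  # state of the side currently face up

    def flip(self):
        # Turning the card over toggles the state; nothing is contradictory,
        # because the two values hold at different times.
        self.showing_true = not self.showing_true
        return self.showing_true


card = Card()
flips = [card.flip() for _ in range(4)]
```

After this runs, `history` holds the successive values that `x` took, whereas `f_table` never changes however often it is consulted. The card simply alternates between its two states, which is how a programmer reads the "paradox".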


Last Updated (Monday, 26 November 2018)