| Real World Adversarial Images |

| Written by Mike James | |||

| Wednesday, 09 August 2017 | |||


Just when you thought the adversarial image flaw in neural networks couldn't get any worse, someone comes along and shows how to build such images in the real world. Yes, a stop sign can be changed to a speed limit sign simply by adding some carefully crafted stickers or even some graffiti. This converts a lab curiosity into something potentially dangerous.

When adversarial images were first discovered it was something of a shock. You could take an image and add some small-valued perturbations that look like noise, and a neural network would misclassify the doctored image even though it looked the same to a human.

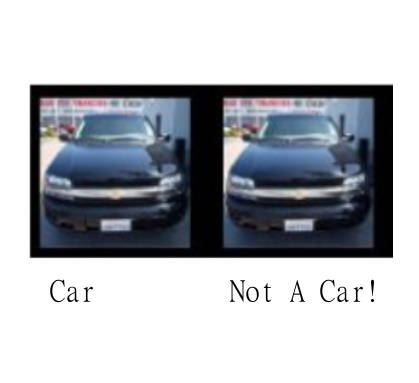
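To make the idea of a small, noise-like perturbation concrete, here is a minimal sketch using a toy linear classifier on random data. Everything in it is an assumption made for illustration: the weights `w`, the stand-in image `x` and the `predict` helper are hypothetical, and the gradient-sign step is just one well-known way of building such a perturbation, not necessarily the method used in the research discussed here.

```python
import numpy as np

rng = np.random.default_rng(0)
n_pixels = 28 * 28

# Hypothetical toy model: a linear classifier with random weights.
w = rng.normal(size=n_pixels)
# Stand-in "image" with pixel values in [0, 1].
x = rng.uniform(0.0, 1.0, n_pixels)


def predict(v):
    """Return class 1 if the linear score is positive, else class 0."""
    return int(w @ v > 0)


score = w @ x

# For a linear model the gradient of the score with respect to the
# pixels is simply w. Moving every pixel by eps against the sign of
# the gradient changes the score by eps * sum(|w|), so a tiny eps is
# enough when there are many pixels.
eps = 1.1 * abs(score) / np.abs(w).sum()
x_adv = x - np.sign(score) * eps * np.sign(w)

# Note: a real attack would also clip x_adv to the valid pixel range.
print("per-pixel change:", eps)
print("original class:", predict(x), "perturbed class:", predict(x_adv))
```

Each pixel moves by the same small amount `eps`, yet the many small changes add up to flip the decision. Deep networks are not linear, but this accumulation of tiny per-pixel changes is a commonly offered intuition for why noise-like perturbations can work.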

This made neural networks look silly, but the flaw seemed harmless enough as the perturbations don't occur in the real world. You can even reasonably speculate that the fact that they do not occur in nature is the reason that neural networks get the wrong answer when they are present. The network never sees such patterns and so doesn't take account of them while learning to recognize natural objects.

When people have suggested that adversarial images could be used to attack vision systems, the obvious reply has been that to do so the attacker would need physical access to the video feed in real time. This is not impossible, but it is unlikely.

The latest research from the University of Washington, University of Michigan, Stony Brook University and the University of California Berkeley, however, suggests that we might have relaxed too soon. It turns out that we can create adversarial images in which the perturbation is not noise spread across the whole image but a set of local block changes, confined to the recognized object, that could be implemented as stickers or graffiti-like paint blobs.

The perturbation is restricted to the recognized object because, while unrestricted perturbations can modify the background, in the real world all that we can modify is the object. That is, if we are trying to perturb a Stop sign we can only do so by adding blobs or graffiti to the sign and not so easily to the entire background of the different images that a camera might capture.

The approach is remarkably simple, so simple you might have guessed that it wouldn't work. All you do is use the standard optimization methods used to generate unrestricted perturbations, but restrict them to the recognized object. To make sure that the perturbations can be implemented by sticking on blocks of color, or graffiti-like shapes, the optimization is also performed through a mask that restricts changes to specific blocky or graffiti-like regions.
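The masked optimization can be sketched in a few lines. This is only a toy under stated assumptions: the classifier is a random linear softmax model rather than a trained road-sign network, the function name `masked_targeted_attack`, the 8x8 image and the two-bar mask are all hypothetical, and the amplitude of the perturbation is left unconstrained, whereas a real attack would keep it within printable colors.

```python
import numpy as np


def softmax(z):
    """Numerically stable softmax of a 1-D array of logits."""
    e = np.exp(z - z.max())
    return e / e.sum()


def masked_targeted_attack(W, image, mask, target, steps=2000):
    """Gradient descent on cross-entropy towards `target`,
    changing only the pixels where mask == 1."""
    x = image.ravel()
    m = mask.ravel().astype(float)
    # Step size 1/L, where L bounds the curvature of the loss
    # for a linear softmax model restricted to the masked pixels.
    L = 0.5 * np.linalg.norm(W * m, 2) ** 2
    lr = 1.0 / L
    onehot = np.zeros(W.shape[0])
    onehot[target] = 1.0
    delta = np.zeros_like(x)
    for _ in range(steps):
        p = softmax(W @ (x + m * delta))
        # Gradient of the loss w.r.t. delta, zeroed outside the mask.
        grad = m * (W.T @ (p - onehot))
        delta -= lr * grad
    return (m * delta).reshape(image.shape)


rng = np.random.default_rng(0)
n_classes, side = 3, 8
W = rng.normal(size=(n_classes, side * side))    # hypothetical toy classifier
image = rng.uniform(0.0, 1.0, size=(side, side))  # stand-in for the sign

# Two horizontal "sticker" bars: the only pixels that may change.
mask = np.zeros((side, side))
mask[2:4, 1:7] = 1
mask[5:7, 1:7] = 1

original = int(np.argmax(W @ image.ravel()))
target = (original + 1) % n_classes               # any other class

perturbation = masked_targeted_attack(W, image, mask, target)
adversarial = image + perturbation
predicted = int(np.argmax(W @ adversarial.ravel()))
print("original:", original, "target:", target, "after attack:", predicted)
```

The only difference from an unrestricted attack is the multiplication by the mask, which zeroes the gradient everywhere except inside the sticker regions, so the rest of the image is left untouched.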
This account is a bit of a simplification, as the objective function is also modified to get the sort of result we are looking for - camouflage graffiti using the words Love and Hate, and camouflage art of two black and two white rectangles. Once the perturbations were computed, the perturbed image was printed out and stuck over the real object. For example, the camouflage graffiti Stop sign looked as if it had had the words LOVE and HATE added to it:

The camouflage art just looks as if some arbitrary blocks have been added:

In both cases the Stop sign was misclassified as a speed limit 45 mph sign in 100% of the cases. A similar experiment with a right turn sign converted it into a Stop sign two thirds of the time and an Added Lane sign one third of the time. The experiments were repeated, but with the perturbations printed out as blocks and stuck onto the real signs. The results were similar.

It seems that the technique works and it works at a range of viewing angles and scales. The paper's terminology, Robust Physical Perturbations, therefore seems justified and it can be applied to the real world. So I guess driverless cars really are at risk.

What is going on here? That is a good question and one that will probably take a lot more research to answer. It is clear that these perturbations are not the unnatural patterns that we have been looking at before. What seems to be going on here is that the optimization is exploiting the way neural networks work.

You see a Stop sign and you are sure it is a Stop sign because you see the letters that make up the word STOP. You aren't fooled by some graffiti or a few blocks stuck on because you recognize the word STOP and use this as a definitive feature of a Stop sign. The sign can be very badly degraded and you would still see a Stop sign as long as you could extract the word STOP from the image.

A neural network simply trained to recognize road signs, which is what the one used in the experiments was, cannot read. Specifically, it has not been trained to read the word STOP. It recognizes the sign by a set of features which it learns are characteristic of a Stop sign - maybe an upright bar next to a closed O shape. Now if someone sticks a block over the O to make it look more like a C and one under the T to disturb the detection of the bar, then recognition is ruined. The optimization procedure is actively seeking these sorts of changes to the features that the network has picked out, and it works.

It does seem, however, that this is not the same phenomenon as the universal adversarial images that we have met before, but more a product of the network not being deep enough and not being trained enough. If the network had seen so many Stop signs that the only thing they had in common was the word STOP, then it would probably be more difficult to disrupt - but this is speculation.

More Information

Robust Physical-World Attacks on Machine Learning Models
Ivan Evtimov, Kevin Eykholt, Earlence Fernandes, Tadayoshi Kohno, Bo Li, Atul Prakash, Amir Rahmati and Dawn Song

Related Articles

Self Driving Cars Can Be Easily Made To Not See Pedestrians
Detecting When A Neural Network Is Being Fooled
Neural Networks Have A Universal Flaw
The Flaw In Every Neural Network Just Got A Little Worse
The Deep Flaw In All Neural Networks
The Flaw Lurking In Every Deep Neural Net
Neural Networks Describe What They See
AI Security At Online Conference: An Interview With Ian Goodfellow
Neural Turing Machines Learn Their Algorithms


| Last Updated ( Wednesday, 09 August 2017 ) |