CIA/NSA want a photo location finder

Written by Alex Armstrong

Wednesday, 03 August 2011

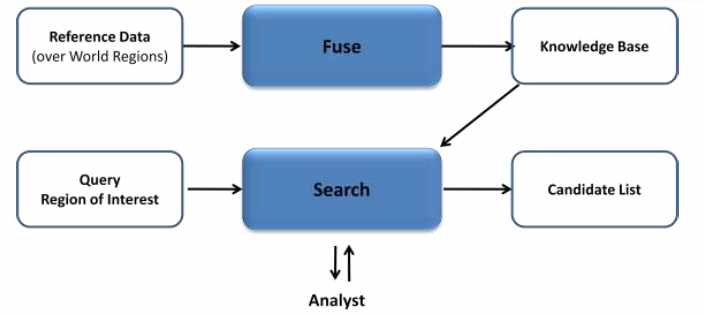

Can you produce software that reads in a photo and tells you where it was taken? This would greatly help in counter-terrorism, and IARPA is looking for help from developers.

The Telegraph has highlighted an interesting challenge to the programming community. Can you produce software that takes a photo and tells you where it was taken, along with anything else that can be deduced from it, such as the time or date?

The idea is that such a system could be used to locate terrorists from propaganda images, but there are plenty of other obvious applications, such as finding missing or kidnapped people.

The project has been proposed by the Incisive Analysis Office of the US Intelligence Advanced Research Projects Activity (IARPA), the body responsible for speculative research for the intelligence community.

The project solicitation notes that digital cameras and video cameras are everywhere but that not all of them add geolocation tags. Human analysts have to work hard to fix the location where a photo or video was taken, using a very wide range of reference data including existing imagery, surface geology, geography, cultural information and so on. This is time-consuming and error-prone. More importantly, it can be very difficult to put a confidence on any location so obtained.

According to the solicitation, there are already systems in existence that aim to help the human analyst by providing image matching. The problem is that these techniques only work well where there is a high population density and where notable features such as mountains are present in the images. It is implied that tourists are a significant source of images, which raises the question of why resources such as Google Street View aren't used more.

IARPA also suggests that a multidisciplinary approach is expected, involving computer vision, geography, botany, meteorology, geology and so on.
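To make the idea of combining several imperfect clues into a ranked list of locations with a confidence attached a little more concrete, here is a minimal sketch in Python. It is not taken from the solicitation: the function name `fuse_candidates`, the source names, the region names and all the scores are invented for illustration, and it naively assumes that the sources are independent of one another.

```python
import math


def fuse_candidates(evidence, floor=1e-3):
    """Combine per-source scores into one normalised confidence per candidate.

    evidence: dict mapping a source name to a dict of {candidate: score},
    where each score lies in (0, 1]. A candidate that a source says nothing
    about receives the small `floor` score rather than being eliminated.
    Returns a list of (candidate, confidence) pairs, best first.
    """
    candidates = set()
    for scores in evidence.values():
        candidates.update(scores)
    if not candidates:
        return []

    # Sum log-scores across sources (hypothetical independence assumption).
    log_totals = {
        c: sum(math.log(max(scores.get(c, floor), floor))
               for scores in evidence.values())
        for c in candidates
    }

    # Subtract the peak before exponentiating to avoid numeric underflow,
    # then normalise so that the confidences sum to 1.
    peak = max(log_totals.values())
    weights = {c: math.exp(v - peak) for c, v in log_totals.items()}
    total = sum(weights.values())
    return sorted(((c, w / total) for c, w in weights.items()),
                  key=lambda pair: pair[1], reverse=True)


# Invented example data: three clue sources scoring three candidate regions.
evidence = {
    "image_matching": {"region_a": 0.6, "region_b": 0.3, "region_c": 0.1},
    "vegetation": {"region_a": 0.2, "region_b": 0.7},
    "geology": {"region_a": 0.5, "region_b": 0.4, "region_c": 0.1},
}

ranked = fuse_candidates(evidence)
for name, confidence in ranked:
    print(f"{name}: {confidence:.3f}")
```

In this toy data the image match alone favours `region_a`, but once the vegetation and geology clues are multiplied in `region_b` comes out on top. A real system would need far better calibrated scores, and a way to model sources that are correlated, before the resulting number could be trusted as a confidence.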
The task is: to build on existing research systems to develop technology that augments the analyst’s abilities to address the geolocation task. Required technical innovations include the 1) integration of analysts’ abilities and automated geolocation technologies to solve geolocation problems, 2) fusion of diverse, publicly-available, but often imperfect data sources, and 3) expansion of automated geolocation technologies to work efficiently and accurately over all terrain and large search areas. If successful, Finder will deliver rigorously tested solutions for the image/video geolocation task in any outdoor terrestrial location.

Given the huge database of photos available on the web, this doesn't seem like an impossible task, especially as Google and others already have image search algorithms that will retrieve images similar to a target image. However, the specification includes details of what government-provided data can be assumed.

The specification says that the system can produce a candidate list in the first instance and that it can interact with a human analyst for guidance - a proposed model is shown below:

One source of information that is explicitly ruled out of play is face recognition. Of course, using a face or person to fix a location is a bit like trying to navigate using cars on the freeway as fixed points...

So can you help realize the dream of showing the machine a photo and having it spit out the location it was taken from?

If you do succeed, the next challenge is building software that hides a photo's location from your original software...

Further reading

US spies plan photo location finder


Last Updated ( Wednesday, 03 August 2011 )