| Data Compression The Dictionary Way - ZIP |

| Written by Alex Armstrong | ||||||

| Thursday, 04 June 2020 | ||||||

Page 2 of 2

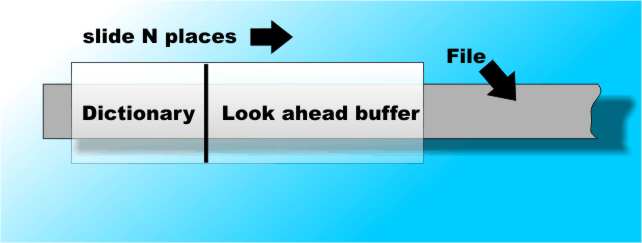

Best Not FirstYou don't just want to accept the first match that you find in the dictionary. Why? Well it doesn't matter what sort of match you get, the token still consists of three elements so obviously you do better to code as many characters per token as possible. Thus assuming that the window has a portion of the file which has already been compressed - the dictionary and a portion we are trying to compress - the look ahead buffer then this makes the first part of the algorithm: 1) Find the longest match between the phrase in the look-ahead buffer and the dictionary and code this as a three-element token P,N,C where P is the position of the match, N is the length and C is the character following the matching phrase. Now what do you do? You have successfully coded N+1 characters and output a token to the compressed file you are building. This means that you have finished with N+1 characters in the look ahead buffer. This is where the "Sliding" part of the sliding windows algorithm comes in. Having coded N+1 characters why not just slide the window N+1 characters to the right to read in N+1 more characters:

The N+1 characters that were in the look-ahead are now in the dictionary. The look-ahead buffer now contains nothing but characters that are yet to be coded so we can repeat the step of finding the best match and issuing a token. But wait, what about the N+1 characters that fell off the left-hand end of the dictionary buffer when the window moved? The answer is that if the window slides over the entire file so that data enters at the right and is coded as it moves from the look-ahead to the dictionary then the data that is lost out of the left-hand end of the window will always have been coded at some time in the past. In this way the dictionary is updated with new characters but all of these have already been compressed and output as a token. So the second step in the method is: 2) Shift the window N+1 character to the right and repeat step 1 That's all there is too it. The final compressed file is just a stream of tokens and you don't need to store a dictionary within the compressed file because the dictionary is just part of the uncompresed file which is built up as you uncompress it. That is the uncompressed file is its own dictionary. Getting Started And DecompressionThere are of course plenty of practical details that I have glossed over in the description but these are the sort of thing that a programmer can easily notice and solve. The main question that must be worrying you concerns how the whole coding process gets going. When the window first slides over a data file there is nothing in the dictionary and data slides in until it fills the look-ahead buffer. At this point the contents of the look-ahead have to be coded before they can pass on to the dictionary - but the dictionary is empty! The simple solution is to use a token of the form 0,0,C to code any character C that doesn't match anything in the dictionary. Notice that using a 0,0,C token actually takes more space than storing the symbol C - so we don't want to use many of these. You should be able to see that the first portion of any file will be codes as a stream of 0,0,C tokens which become less common as the dictionary fills and matches for longer strings are found. The 0,0,C tokens are also the solution to how the dictionary is initially constructed during decompression. Decompression also starts out with an empty dictionary. The first token must be a 0,0,C type and this is used to place a character in the look-ahead buffer, which would now be better named an expansion buffer as each token is read from the compressed file and expanded into the the look-ahead. Notice that the expansion is done so the the phrase is inserted at the boundary between the dictionary and the look-ahead. The expansion works by taking the N characters starting at P from the dictionary and then adding the character in the token to the end. Thus after each token is expanded the look-ahead contains N+1 new characters. These are then shifted into the dictionary ready to help with the decoding of the next token. You can see that the 0,0,C tokens boot-strap the whole sliding window dictionary method into life. There are many alternative ways of doing this however. Why Change The Dictionary?As already mentioned this form of sliding window compression is generally called LZ77 after its inventors. It is remarkably good at file compression because the dictionary changes to suit the section of the file currently being processed. This is good because most files follow a principle of local similarity. In other words, if a symbol or phrase has just occurred it is very likely to occur again somewhere near the first occurrence. For example consider an alternative method of simply taking the first part of the file and using it as a dictionary. The first part of any text is usually not representive of the rest and it would generally not provide a good compression ratio. The fact that the dictionary is dynamic is what makes the sliding window scheme so good. Of course the efficiency of the whole scheme depends on the size chosen for the window. ConclusionSo that's all there is to sliding window dictionary compression. And this is the method that all of the super quick and powerful data compression methods use - PKZIP, Bitlocker and even the QIC-122 tape compression standard. Of course they all throw in their own added extras to make the process more efficient but sliding window compression is the foundation of them all. It is also the basis of other compression algorithms such as some "de-dup" methods that claim to scan an entire file system and remove redundant files. It is also important in computer science as a measure of the amount of information in a string. Even though the principle is simple, tuning for good efficiency is a difficult job. Don't be tempted to implement your own sliding window compression algorithm - there are too many good libraries just waiting to be used.

Related ArticlesInformation TheoryTo be informed about new articles on I Programmer, install the I Programmer Toolbar, subscribe to the RSS feed, follow us on, Twitter, Facebook, Google+ or Linkedin, or sign up for our weekly newsletter.

Comments

or email your comment to: comments@i-programmer.info

<ASIN:012620862X> <ASIN:1846286026> <ASIN:1848000715> <ASIN:1558514341> <ASIN:1584883138> <ASIN:0127887741> |

||||||

| Last Updated ( Thursday, 04 June 2020 ) |