Synaptic - Advanced Neural Nets In JavaScript

Written by Mike James

Thursday, 23 October 2014

This is a project you need to know about if you have an interest in AI or in teaching AI. Put simply, it is a JavaScript library that lets you implement and work with neural networks. What's special is that it lets you go well beyond a simple introduction. Synaptic is the work of a single programmer, Juan Cazala, a computer engineering student in Argentina. In his spare time, it seems, he likes having fun with JavaScript and has created an open source framework that makes building, training and testing neural networks very easy. You can run Synaptic in a browser or using Node.js and, while it isn't up to working with the huge networks that the likes of Google are playing with, it is fast enough for real work. It is another demonstration of the ever-improving performance of modern JavaScript.

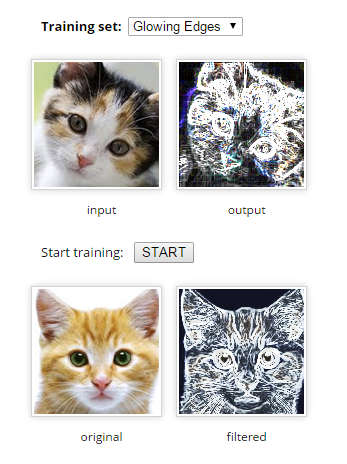

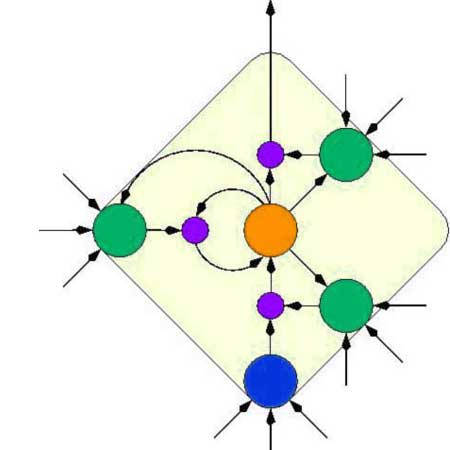

Synaptic implements a general "architecture free" algorithm that can be used to create a wider range of network types than is usually encountered. It comes with some predefined networks: multilayer perceptrons, multilayer long short-term memory (LSTM) networks, liquid state machines, and so on. The key difference is that Synaptic allows you to create second-order and recurrent networks, which are less common than simple feed-forward networks and are traditionally regarded as harder to work with.

There are also some nice examples but, be warned, if you try them out you might see an error page because the author's account is over its CPU limit. This is a low-budget production that could benefit from some sponsorship. Once you get beyond the demos you will have to learn how to create layers of neurons. To train a model, all you have to do is provide an input and a target output. You can work with individual layers, with complete networks of layers, and even with models that combine multiple networks.
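To make the "provide an input and a target output" idea concrete, here is a minimal sketch in plain JavaScript that runs in Node.js with no dependencies. It does not use Synaptic and is not its API: the names `TinyLayer`, `activate` and `propagate`, the learning rate and the epoch count are all assumptions chosen for illustration. It trains a single layer of sigmoid neurons on the logical OR function.

```javascript
// Illustrative sketch only: a dependency-free, single-layer network.
// TinyLayer, activate and propagate are hypothetical names for this example;
// they are not taken from the article and are not Synaptic's implementation.

function sigmoid(x) {
  return 1 / (1 + Math.exp(-x));
}

class TinyLayer {
  constructor(inputSize, outputSize) {
    // Zero initial weights keep the run deterministic.
    this.weights = Array.from({ length: outputSize }, () =>
      new Array(inputSize).fill(0)
    );
    this.biases = new Array(outputSize).fill(0);
    this.lastInput = [];
    this.lastOutput = [];
  }

  // Forward pass: weighted sum plus bias, squashed by the sigmoid.
  activate(input) {
    this.lastInput = input.slice();
    this.lastOutput = this.weights.map((row, j) => {
      const sum = row.reduce((acc, w, i) => acc + w * input[i], this.biases[j]);
      return sigmoid(sum);
    });
    return this.lastOutput.slice();
  }

  // One gradient step on the squared error between the most recent
  // activation and the supplied target output.
  propagate(rate, target) {
    this.lastOutput.forEach((out, j) => {
      const delta = (target[j] - out) * out * (1 - out);
      this.lastInput.forEach((x, i) => {
        this.weights[j][i] += rate * delta * x;
      });
      this.biases[j] += rate * delta;
    });
  }
}

// Training data for logical OR: each sample is an input and a target output.
const trainingSet = [
  { input: [0, 0], output: [0] },
  { input: [0, 1], output: [1] },
  { input: [1, 0], output: [1] },
  { input: [1, 1], output: [1] },
];

const layer = new TinyLayer(2, 1);
for (let epoch = 0; epoch < 5000; epoch++) {
  for (const sample of trainingSet) {
    layer.activate(sample.input);        // present the input
    layer.propagate(0.5, sample.output); // nudge towards the target
  }
}

const predictions = trainingSet.map((s) => layer.activate(s.input)[0]);
console.log(predictions.map((p) => p.toFixed(2)));
```

A single layer like this can only learn linearly separable functions such as OR. Problems like XOR need at least one hidden layer, and the recurrent and second-order networks mentioned above go further still, which is where a library earns its keep.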

It is difficult to convey how sophisticated this all is, and the best way to appreciate it is to take a look at the GitHub page. This is a project that is worth supporting as well as using. As the author says at the end of the README: "Anybody in the world is welcome to contribute in the development of the project."

More Information

A generalized LSTM-like training algorithm for second-order recurrent neural networks

Related Articles

Google's Neural Networks See Even Better

The Flaw Lurking In Every Deep Neural Net

Google's Deep Learning AI Knows Where You Live And Can Crack CAPTCHA

Google Uses AI to Find Where You Live

Google Explains How AI Photo Search Works

Google Has Another Machine Vision Breakthrough?


Last Updated ( Thursday, 23 October 2014 )