| The Fruit Fly Brain Improves Our Search Algorithms |

| Written by Mike James | |||

| Wednesday, 15 November 2017 | |||

|

The basic hype to this story is "fruit fly brains will improve the search engines of the future". This isn't quite true, but the real message is interesting enough. A study of the fruit fly brain reveals that it is doing a similarity search using a modified locality-sensitive hashing. Under tests it turns out to be a better algorithm. Perhaps neural algorithms are going to be a "thing" in the future and we might have to add a whole new class of them to traditional algorithm courses. A new paper by S. Dasgupta, C. F. Stevens, and S. Navlakha of the Salk Institute and UCSD, A neural algorithm for a fundamental computing problem. Science, 358, outlines how fruit flies deal with smell. (The published refereed paper is shamefully behind a paywall the reference given is to a preprint.) Locality-sensitive hashing (LSH) is a form of hashing that maps inputs that are similar in some sense to hashes that are similar. This isn't generally what a hash function should do because the idea is to spread the inputs as evenly as possible across the possible outputs. LSH, however, is more concerned with finding similarities than it is with storage and retrieval, which is what hashing is often used for.

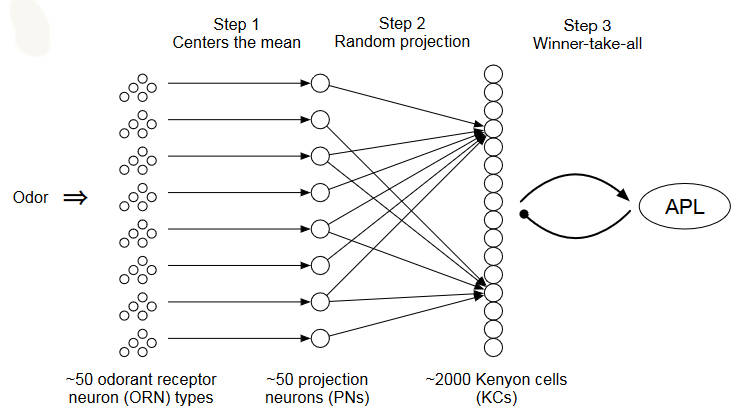

In the case of the fruit fly, the olfactory neurons respond to smells by outputting "tags" that are similar for similar smells. They have 50 smell sensing neurons which fire at different rates according to the input smell. This activity is then passed on to a second set of 50 "projection" neurons which feed into 2000 neurons called Kenyon cells. Each odor, therefore, has a position in a 50-dimensional space that depends on odor and strength of odor. This is then transformed by the projection neurons into a 50-dimensional space where the location only depends on odor and not strength. The dimensionality is then expanded to 2000 dimensions by the Kenyon cells. Each one is connected to a small random selection of about 6 projection cells. The Kenyon cells fire in proportion to their input,s but only the highest-firing 5% are taken any notice of; the rest are silenced in a Winner-take-all (WTA) circuit. The 5% that fire constitute the tag that is assigned to the odor. This seems to work like a locality-sensitive hash function in that odors that are similar produce similar tags. However, this is different from the LSH functions used in similarity search algorithms. It uses sparse binary random projections to expand the dimension of the input, which it then shrinks down to the top 5% dimensions. Implementing the fly's algorithm and testing it against a conventional LSH indicates that it outperforms it using the same computational complexity. Take a look at the video: So we will be using fly algorithm based similarity searches in the future? Perhaps, but this isn't the important point. As the conclusion to the paper puts it: Some of the fly’s ingredients have been used piece-meal before; for example, MinHash and winner-take-all hash both use WTA-like components, though neither propose expanding the dimensionality; similarly, random projections are used in many LSH families, but none, to our knowledge, use sparse, binary projections. The combination of these computational ingredients thus appears novel, and it seems remarkable to us that evolution has discovered them for fly olfaction. Finally, while the fly olfactory system has been extensively mapped experimentally, there is some evidence that the three hallmarks used in the fly’s circuit motif may also appear in other brain regions and species. Thus, locality-sensitive hashing may be a general principle of computation used in the brain.

Of course, it might be that finding pictures of kittens similar to the ones we already have is more important. Drosophila melanogaster. Credit:André Karwath. More InformationA neural algorithm for a fundamental computing problem Related ArticlesJoin The Fruit Fly Brain Hackathon A Worm's Mind In An Arduino Body OpenWorm Building Life Cell By Cell To be informed about new articles on I Programmer, sign up for our weekly newsletter, subscribe to the RSS feed and follow us on Facebook or Linkedin.

Comments

or email your comment to: comments@i-programmer.info |

|||

| Last Updated ( Wednesday, 15 November 2017 ) |